
Over the past decade, the SERPs have undergone some serious transformations.

From heavy-hitting March and June core algorithm updates to BERT’s nuanced take on search queries, the search engine has upped the ante on content quality and relevance.

Going into 2020, marketers need to get to know all 167 pages of the Quality Rater Guidelines, a suggestion Google’s Danny Sullivan has mentioned several times throughout the year.

In this article, I’ll go over the most significant Google updates and events of 2019 and the impact they had on search.

Let’s start at the beginning.

Kicking off the year, Google’s so-called Valentine’s Day update brought positive changes to many websites.

Back in February, Search Engine Journal wrote that SEOs initially thought the update was targeting niche sites, as well as quality indicators.

However, Google revealed that the update was less about addressing quality issues and more about delivering relevant content to users.

Sistrix found that sites like irs.com (not the IRS’s actual website, irs.gov) and dmv.org (again, not the “real” DMV) saw significant drops starting around January 31st.

For the average website, Valentine’s Day didn’t bring much drama. The key takeaway here is to focus on both quality and intent.

All pages must have a distinct purpose, and that purpose must also offer a benefit to the user.

Pages must also have the appropriate expertise, though what that means varies by category.

The March 12th update was the first major Google update of the year, affecting many sites still reeling from the Medic Update of August 2018.

The “March 2019 Core Algorithm Update,” or “Florida 2,” was labeled “big” by Google, though even today it’s a bit difficult to find a definitive answer on which issues this update targeted.

Though Google has always maintained that broad core updates focus on relevance factors, not specific niches or websites, rumors have suggested that the algorithm did target specific types of content.

According to RankRanger, YMYL content and niche industries like Home and Garden and Travel were hit with the same amount of force. The site found that YMYL categories like Finance and Health were just as volatile as other niches.

While not specifically out to “get” YMYLs, Florida 2 did have a significant impact on these categories. Google continues to tweak its algorithm to deliver credible information when someone enters a “sensitive” query.

After the E-A-T (Medic) Update in August 2018 and its follow-up in October 2018, March 2019 brought major losses to some well-known health sites.

Sites like everydayhealth.com and verywellhealth.com reportedly lost about half of their SERP visibility following the update.

What’s interesting here is that other health websites, like draxe.com, benefited from their competitors’ losses. Previously, Dr. Axe was hit hard in the E-A-T updates, likely due to claims that don’t square with “medical or scientific consensus.”

Florida 2 also revealed that the algorithm favors websites that can provide a high level of trust. Given the sensitive nature of YMYL content, this is particularly notable for keywords related to health or financial information.

Another revelation here is that branding became a big deal. For niche sites, regardless of quality, this meant losing even more ground to big-name competitors, who tend to collect more clicks than the little guys.

On Friday, April 5, 2019, several website owners reported pages mysteriously disappearing from the SERPs.

As it turns out, about 4% of Google’s index was hit by the bug, which was the result of a technical error.

Google fixed the bug, and for the most part, rankings were restored. Ultimately, human error was to blame here. From Search Engine Journal, here’s how it all went down:

Obviously, it’s out of your hands if a Google developer deletes an important piece of code.

The key takeaway here is that if you believe your URLs have been deindexed, you can request reindexing manually with the URL Inspection tool in Google Search Console.

Keep in mind, the tool comes with daily limits and the process can take up a considerable chunk of time. Focus your efforts on your most important pages first, and go from there.
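Prioritizing by importance is easy to plan ahead of time. The sketch below is a minimal, hypothetical planner: it assumes you have exported each deindexed URL along with some importance signal (monthly clicks here), and `daily_quota` is a placeholder you would set to whatever limit Search Console actually shows you. It only decides the order and the daily batches; it does not contact Google, so each URL still has to be submitted through the URL inspection tool.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    monthly_clicks: int  # hypothetical importance signal from your own analytics export


def plan_reindex_batches(pages, daily_quota):
    """Group pages into per-day batches, highest-traffic pages first.

    `daily_quota` is a placeholder: set it to the request limit Search Console
    shows for your property. This function only plans the order; it does not
    submit anything to Google.
    """
    if daily_quota < 1:
        raise ValueError("daily_quota must be at least 1")
    # Sort by descending clicks; sorted() is stable, so ties keep their input order.
    ordered = sorted(pages, key=lambda p: p.monthly_clicks, reverse=True)
    # Slice the ordered list into consecutive chunks of at most daily_quota pages.
    return [ordered[i:i + daily_quota] for i in range(0, len(ordered), daily_quota)]


# Hypothetical example URLs and click counts.
pages = [
    Page("https://example.com/blog/old-post", 40),
    Page("https://example.com/pricing", 900),
    Page("https://example.com/contact", 150),
]
for day, batch in enumerate(plan_reindex_batches(pages, daily_quota=2), start=1):
    print(f"Day {day}: " + ", ".join(p.url for p in batch))
```

Sorting by clicks is just one choice; revenue or conversions per page would slot into the same `key` function.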

Less than two months after the April deindexing debacle, May brought another indexing bug to the SERPs. This time, however, new content wasn’t being indexed.

According to Barry Schwartz, the bug was immediately clear when searching for a big-name publisher, such as the New York Times or the Wall Street Journal.

This time around, it seems that the problem was caused by infrastructure or algorithmic updates. In other words, no one deleted the code.

Ultimately, the May indexing bug was resolved within a few days.

News sites that make frequent appearances in Google’s Top Stories section likely saw some big losses, but regained their positions after the fix.

On June 2, Google issued a warning on Twitter announcing an upcoming update.

The announcement stated that Google’s “guidance on such updates remains as we’ve covered before.” The next day, Google confirmed via Twitter that the latest core algorithm update was live across all of its data centers.

Frustratingly, Google Search Liaison Danny Sullivan linked to a 2018 post stating that, after an update, there is usually nothing to fix. The post notes that most sites see “little noticeable change.” You know, business as usual.

Except… June’s update was a big one. So big it came with a heads-up from Danny Sullivan to help website owners brace themselves.

The core update hit websites that felt the impact of Google’s August 2018 Medic update. Many of the same sites saw rankings continue to fall, while others reported some solid recoveries.

According to Google, losses aren’t designed to be a penalty for spammy content, but they may signal a relevancy problem or a weak brand profile. Or, maybe your writers aren’t quite as prolific as those who write for your competitors.

As with the March algorithm update, June brought some similar lessons to the table. We’re seeing more small sites get the shaft, regardless of quality.

This update highlights the importance of building a consistent brand across multiple channels. A 2018 report from eConsultancy found that 82% of searchers click on the most familiar brand in the search results first.

The report also found that those clicks are typically based on factors that exist outside of the SERP environment:

Strategies like targeting high-intent keywords, branded search terms, and going omnichannel are becoming more important than ever.

In addition to the core update, June brought us another change: diversity.

The site diversity update was unrelated to the broad core update and aimed to serve users with results coming from several different domains. For searchers, diversity means more choice on the front page.

In an increasingly crowded SERP space, sites with a domain monopoly push other sites further down the rankings, taking over the entire front page. Google announced that the update would aim to display no more than two results from the same domain.

However, Google states that it may still show more than two listings from the same domain when doing so best matches searcher intent.

Additionally, the diversity effort doesn’t extend to SERP features like the Knowledge Graph or Image Carousel. It applies strictly to traditional, organic listings.
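To make the “no more than two results per domain” rule concrete, here is a toy sketch of a per-domain cap applied to a ranked list of organic URLs. It is not Google’s implementation. The function name is hypothetical, it only folds a leading `www.` into the bare host (other subdomains count as separate hosts), and it has no intent-based override like the one Google reserves for itself.

```python
from urllib.parse import urlparse


def cap_results_per_domain(ranked_urls, max_per_domain=2):
    """Drop organic listings beyond max_per_domain per host, keeping rank order.

    Illustrative only: "www." is folded into the bare host, but other
    subdomains are counted separately, and there is no intent-based exception.
    """
    counts = {}
    kept = []
    for url in ranked_urls:
        # urlparse(...).hostname is already lower-cased (or None if missing).
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[len("www."):]
        if counts.get(host, 0) < max_per_domain:
            kept.append(url)
            counts[host] = counts.get(host, 0) + 1
    return kept


# Hypothetical ranked organic results, best first.
results = [
    "https://www.amazon.com/a",
    "https://amazon.com/b",
    "https://www.amazon.com/c",
    "https://example.org/x",
    "https://www.amazon.com/d",
]
print(cap_results_per_domain(results))
```

A fuller version would map every subdomain to its registrable domain, which requires a public-suffix list rather than simple string handling.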

As it turns out, the diversity update didn’t have a huge impact on website rankings, though it’s hard to say for certain how much of an impact it truly had given the close proximity to the broad core algorithm update.

Moz reported that sites like Amazon still dominated the front page for queries with strong brand or navigational intent. However, they noted that sites that previously occupied three to five front-page results dropped to two or three per page.

The takeaway here is that the diversity update has helped level the playing field for small to mid-sized sites. However, the biggest brands still come out on top pretty consistently.

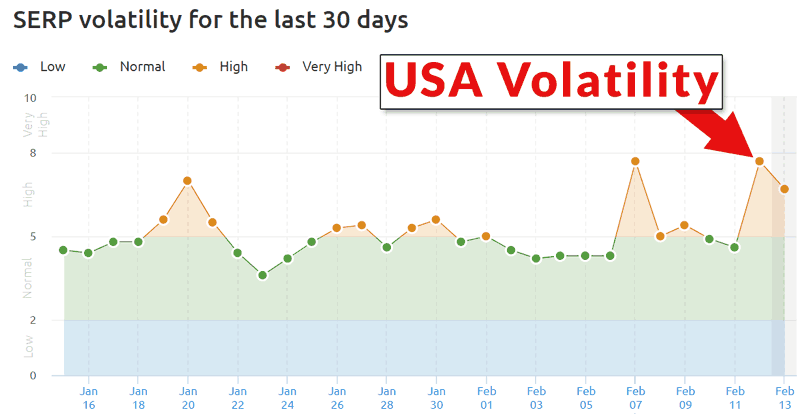

When September’s core algorithm update rolled out, things were initially pretty quiet.

Google didn’t tweet out an announcement this time to alert SEOs like it did in June, and many sites that suffered during 2018’s E-A-T updates saw some recoveries.

However, a couple of days in, surges and drops began to hit the SERPs. As we saw in March and again in June, health and medical sites saw the biggest changes.

RankRanger data found that Travel sites and YMYL categories saw the most significant volatility following the update.

They also noted that many of the health websites hit by last year’s Medic update regained some (not all) of their traffic.

In my September Core update analysis, I found that many pages lost rankings on long-tail keywords. Top terms didn’t see much change, but longer-tail terms ranking in positions 21 and beyond took a hit.

Here’s a screenshot I posted initially, showing the “type of keywords” I’m referring to:

These types of keywords are typically considered the low-hanging fruit of ranking opportunities; in other words, easy to squeeze into a blog post and outrank the competition.
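If you want to check your own rank-tracking data for the same pattern (top terms stable, terms ranking 21+ slipping), a before/after comparison like the sketch below is one way to do it. It assumes two hypothetical exports mapping keyword to position, one from before the update and one from after; the function name is made up for illustration, and the cutoff of 20 simply mirrors the observation above.

```python
def rank_change_by_bucket(before, after, cutoff=20):
    """Average position change, split by each keyword's pre-update position.

    `before` and `after` map keyword -> rank position (1 = top). Keywords
    missing from `after` are skipped here; a real audit might count them as
    lost instead. A positive average means that bucket moved down the SERP.
    """
    changes = {"top": [], "deeper": []}
    for keyword, old_pos in before.items():
        if keyword not in after:
            continue
        bucket = "top" if old_pos <= cutoff else "deeper"
        changes[bucket].append(after[keyword] - old_pos)
    # None marks an empty bucket instead of reporting a misleading 0.0 average.
    return {name: (sum(d) / len(d) if d else None) for name, d in changes.items()}


# Hypothetical rank-tracker snapshots.
before = {"crm software": 3, "best crm": 10, "crm for dog groomers": 25, "free crm with sms": 40}
after = {"crm software": 3, "best crm": 12, "crm for dog groomers": 35, "free crm with sms": 60}
print(rank_change_by_bucket(before, after))
```

Averages hide outliers, so in practice you would also look at the per-keyword changes behind each bucket.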

The key takeaway here is that Google is further refining its ability to deliver relevant results.

For marketers, September’s update means it’s time to bring your A-game to every target search term, regardless of who else is competing.

In October, we were introduced to BERT, Google’s relevance update.

BERT was designed to help Google get a better sense of searcher intent by picking up on the nuances and context of the words used in searches. That way, it can provide the most relevant answers possible to a given search query.

Google uses the following example to explain what this means:

Take the search “2019 brazil traveler to usa need a visa.” Here, the word “to” and its relationship to the other words in the query are important for understanding the meaning.

With BERT, Google’s newfound ability to grasp nuance has finally addressed one of the most frustrating aspects of Search. Previously, Google wouldn’t have understood the importance of this connection and would have returned results about U.S. citizens traveling to Brazil.

BERT is certainly an interesting update. While there’s not a whole lot you can do to optimize for the algorithm directly, it does mean that you need to get serious about quality.

Google recommends writing content with users’ interests in mind, targeting long-tail, conversational keywords that align closely with the purpose of the page.

Because Google now understands how words like “to” or “with” modify meaning, you’ll need to be mindful of the terms you choose to target.

Additionally, BERT has upped the ante on relevance. Google’s Danny Sullivan recommends sticking to one topic per page for your best chance of ranking.

BERT also affects featured snippets. Per Dr. Pete, any update that changes how Google interprets search queries will affect rich results, since they’re still part of organic search.

Google finally confirmed the November algorithm update via Twitter on December 2, 2019.

This update, called the “Nov. 2019 Local Search Update,” brought neural matching to local search results.

While Google has been applying neural matching to search for over a year, this is a new addition to local.

According to Google, the change will impact features like Google My Business listings, the map pack, and local-specific content, but ranking criteria will largely remain the same.

As we’ve seen previously with BERT, the Local update is all about analyzing queries to deliver the content best matched to user intent.

That said, despite the similarities, Danny Sullivan has confirmed that the two updates are not related. Neural matching and BERT are not the same algorithm.

The November update has impacted a broad range of industries and niche sites. According to Search Engine Roundtable, some websites saw traffic change by as much as 30%-50%.

In this case, neural matching helps Google understand when user queries contain local intent, even if they don’t include a specific business name or description.

Google’s guidance follows the usual bullet points, reiterating that local results are still determined by three key factors: distance, prominence, and relevance.

Local businesses don’t need to make changes to their sites, but they should continue to follow best practices like completing their entire GMB profile, applying relevant schema, and ensuring all directory listings are up-to-date and consistent.
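As an illustration of “applying relevant schema,” the sketch below builds a schema.org `LocalBusiness` block in JSON-LD. The helper name and every business detail are placeholders. A more specific subtype (for example `Restaurant` or `Dentist`) may fit your business better, and the values should match your GMB profile and directory listings exactly so your information stays consistent everywhere.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, country, phone, url):
    """Return a <script> tag containing schema.org LocalBusiness JSON-LD.

    All arguments are placeholders; use the exact name, address, and phone
    number shown on your Google My Business profile.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }
    # indent=2 keeps the markup readable when pasted into a page template.
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Hypothetical business details for illustration only.
print(local_business_jsonld(
    name="Example Bakery",
    street="123 Example St",
    city="San Diego",
    region="CA",
    postal_code="92101",
    country="US",
    phone="+1-555-010-0000",
    url="https://www.example.com",
))
```

Generating the markup from one source of truth, rather than hand-editing it on each page, is what keeps the name, address, and phone number from drifting out of sync.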

Between BERT and the subsequent local update, Google is getting serious about intent, something I suspect will continue long-term.

Given the continued losses seen by health and wellness sites, the fight against misinformation is likely to continue for the foreseeable future.

While you never know for sure what’s next when it comes to Google algorithm updates, based on what’s happened this year (and even in 2018), SEOs should continue to focus on improving relevance, quality, and brand visibility.

Or, as Google’s Danny Sullivan says, just “create great content.” You can start by reading the Quality Rater Guidelines over the holidays.


John Lincoln is CEO of Ignite Visibility, one of the top digital marketing agencies in the nation. Ignite Visibility is a 4x Inc. 5,000 company. Ignite Visibility offers a unique digital marketing program tied directly to ROI with a focus on using SEO, social media, paid media, CRO, email, Amazon and PR to achieve results. Outside of Ignite Visibility, Lincoln is a frequent speaker and author of the books “Digital Influencer” and “The Forecaster Method.” Lincoln is consistently named one of the top digital marketers in the industry and was the recipient of the coveted Search Engine Land “Search Marketer of The Year” award. Lincoln has taught digital marketing and Web Analytics at the University of California San Diego since 2010, and has been named one of San Diego’s most admired CEOs and a top business leader under 40. Lincoln has also made “SEO: The Movie” and “Social Media Marketing: The Movie.” His business mission is to help others through digital marketing.
